OCI FortiGate HA Cluster – Reference Architecture: Code Review and Fixes

Introduction OCI Quick Start repositories on GitHub are collections of Terraform scripts and configurations provided by Oracle. These repositories ... Read More

Learn more about why Eclipsys has been named the 2023 Best Workplaces in Technology and Ontario, Certified as a Great Place to Work in Canada and named Canada’s Top SME Employer!

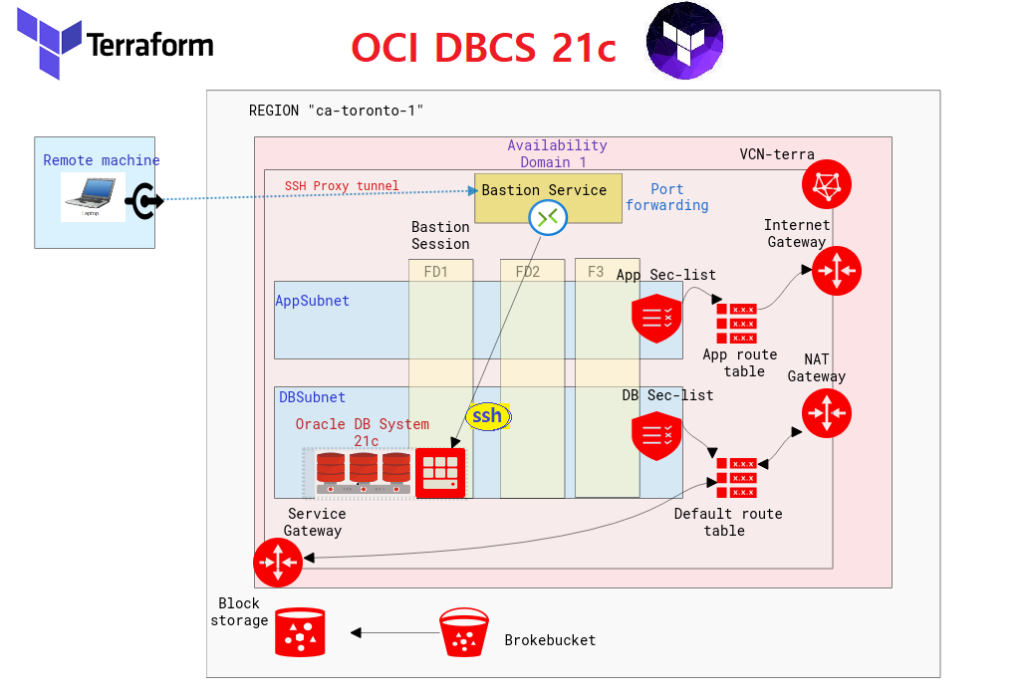

Learn more!What’s the point of moving a database to the Cloud if we can’t automatically deploy it? After blogging about web compute provisioning on AWS (Amazon Web Services), OCI (Oracle Cloud Infrastructure), Azure (Microsoft Azure), and GCP (Google Cloud Platform) using Terraform, the least I could do as a (future-ex) DBA is to Terraform Database provisioning in Oracle Cloud. On top of that, I also wanted to include a Bastion Service Session to connect to the DBCS instance in the Private Subnet.

Here’s a link to my GitHub Repo linked to this lab: brokedba/terraform-provider-oci/database-system

Where do I find a good OCI Database Deployment Sample?

I explored the official Oracle Cloud GitHub Repository, but I couldn’t find a simple stack with no frills. I mean, when you want to deploy a database for the first time, you don’t want to spin 10 other app components that have nothing to do with your DB. I then decided to gather the bare minimum using a function called terraform import that will retro-engineer the code from an existing database, and carried on from there.

The above illustration shows the different components involved in this OCI Terraform Database Stack

VCN (Virtual Compound Network)

Database Cloud System 21c with 1 PDB

Bastion Service and Bastion session using port forwarding SSH targeting the DB Instance

2x Subnets

DB Subnet: Private / Linked to default Route Table

App Subnet: Public / Linked to the App Route Table

2x Route Tables

Default Route Table > Routes to NAT Gateway and Service Gateway

App Route Table > Routes to the Internet Gateway

2x Security Lists

The Database Sec List Ports: Ingress 22/1521 from App Subnet

The App Sec List Ports “: Ingress 22/80/443, egress 1521

Windows: Download and run the installer from their website (32-bit ,64-bit)

Linux: Download, unzip and move the binary to the local bin directory

$ wget https://releases.hashicorp.com/terraform/1.0.3/terraform_1.0.3_linux_amd64.zip

$ unzip terraform_1.0.3_linux_amd64.zip

$ mv terraform /usr/local/bin/

$ terraform --version

Terraform v1.0.3

Pick an empty directory on your file system and issue the following command

$ git clone https://github.com/brokedba/terraform-examples.gitYou will find a sub-directory called database-system in the repository where the DBCS stack is located:

CD into terraform-provider-oci/database-system/ where our configuration resides

$ cd ~/terraform-examples/terraform-provider-oci/database-system$ terraform init

$ terraform -v | grep provider

+ provider registry.terraform.io/hashicorp/oci v3.83.1

database-system directory. Here, only *.tf files matter along with tfvars$ tree

├── bastion.tf ---> OCI Bastion terraform declaration code ├── database.tf ---> OCI DBCS terraform declaration code ├── datasources.tf ---> data source declaration code (i.e to fetch shape ocids) ├── terraform.tfvars ---> TF_environment_variables needed to authenticate to OCI

├── outputs.tf ---> displays the DBCS/Bastion resources detail after the deploy

├── variables.tf ---> Resource variables needed for the deploy

└── vcn.tf ---> Our Networking terraform declaration code Adjust the required authentication parameters in terraform.tfvars according to your tenancy and DB info

# Adapt the below variables to your own tenancy authentication configuration

$ vi terraform.tfvars

export TF_VAR_tenancy_ocid="ocid1.tenancy.oc1..aaaaaaaa" # change me

export TF_VAR_user_ocid="ocid1.user.oc1..aaaaaaaa" # change me

export TF_VAR_compartment_ocid="ocid1.tenancy.oc1..aaaaaaaa" # change me

export TF_VAR_fingerprint=$(cat PATH_To_Fing/oci_api_key_fingerprint)# change me

export TF_VAR_private_key_path=PATH_To_APIKEY/oci_api_key.pem # change me

export TF_VAR_ssh_public_key=$(cat PATH_To_PublicSSH/id_rsa.pub) # change me export TF_VAR_region="ca-toronto-1" # change me

export TF_VAR_db_admin_password="DBwelcome2022##"

$ . terraform.tfvars

I will only show excerpts from the database.tf /output.tf to have an idea but all *.tf files are accessible on my Repo.

resource "oci_database_db_system" "MYDBSYS" {

availability_domain = data.oci_identity_availability_domains.ad1.availability_domains[0].name

compartment_id = var.compartment_ocid

database_edition = var.db_edition

db_home {

database {

admin_password = var.db_admin_password

db_name = var.db_name

pdb_name = var.pdb_name

character_set = var.character_set

ncharacter_set = var.n_character_set

db_workload = var.db_workload

db_backup_config {

auto_backup_enabled = var.db_auto_backup_enabled

auto_backup_window = var.db_auto_backup_window

recovery_window_in_days = var.db_recovery_window_in_days

}

}

db_version = var.db_version

}

shape = var.db_system_shape

license_model = var.license_model

subnet_id = oci_core_subnet.terraDB.id

private_ip = var.db_system_private_ip

ssh_public_keys = ["${var.ssh_public_key}"]

hostname = var.hostname

data_storage_size_in_gb = var.data_storage_size_in_gb

node_count = data.oci_database_db_system_shapes.db_system_shapes.db_system_shapes[0]["minimum_node_count"]

display_name = var.db_system_display_name

}All variables can of course be changed to your liking in the variables.tf

#########################

## DBCS INSTANCE OUTPUT

#########################

output "hostname" {

description = " id of created instances."

value = oci_database_db_system.MYDBSYS.hostname

}

output "private_ip" {

description = "Private IPs of created instances."

value = oci_database_db_system.MYDBSYS.private_ip

}

output "DB_STATE" {

value = oci_database_db_system.MYDBSYS.state

}

output "DB_Version" { value = oci_database_db_system.MYDBSYS.version

}

output "db_system_options" {

value = oci_database_db_system.MYDBSYS.db_system_options

}

#########

# BASTION

#########

output "bastion_name" {

value = oci_bastion_session.mybastion_session.bastion_name

}

output "bastion_session_name" {

value = oci_bastion_session.mybastion_session.display_name

}

output "bastion_session_state" {

value = oci_bastion_session.mybastion_session.state

}

output "bastion_session_target_resource_details" {

value = oci_bastion_session.mybastion_session.target_resource_details

}

output "bastion_session_ssh_connection" {

value = oci_bastion_session.mybastion_session.ssh_metadata.command

}

Make sure you copied the adjusted terraform-tfvars file and sourced it. You can then run the plan command (output is truncated for more readability)

$ terraform plan

------------------------------------------------------------------------

Terraform will perform the following actions:

... # VCN declaration

# oci_bastion_bastion.mybastion will be created

+ resource "oci_bastion_bastion" "mybastion" { ...

# oci_bastion_session.mybastion_session will be created

+ resource "oci_bastion_session" "mybastion_session" {

...

+ target_resource_details {

+ session_type = "PORT_FORWARDING"

+ target_resource_display_name = (known after apply)

+ target_resource_operating_system_user_name = (known after apply)

+ target_resource_port = 22

+ target_resource_private_ip_address = "192.168.78.10"

}

}

# oci_core_subnet.terraApp will be created

+ resource "oci_core_subnet" "terraApp" { ...

# oci_core_subnet.terraDB will be created

+ resource "oci_core_subnet" "terraDB" {

+ availability_domain = "gwmA:CA-TORONTO-1-AD-1"

+ cidr_block = "192.168.78.0/24"

...

# oci_core_virtual_network.vcnterra will be created

+ resource "oci_core_virtual_network" "vcnterra" {

+ cidr_block = "192.168.64.0/20"

+ display_name = "db-vcn"

+ dns_label = "terravcn"

...}

..

# oci_database_db_system.MYDBSYS will be created

+ resource "oci_database_db_system" "MYDBSYS" {

+ availability_domain = "gwmA:CA-TORONTO-1-AD-1"

+ database_edition = "STANDARD_EDITION"

+ data_storage_size_in_gb = 256

+ display_name = "DBCSDEMO"

+ hostname = "hopsdb-oci"

+ license_model = "LICENSE_INCLUDED"

+ private_ip = "192.168.78.10"

+ shape = "VM.Standard2.4"

+ ssh_public_keys =[...

+ db_home {

+ db_version = "21.0.0.0"

...

+ database {

+ db_workload = "OLTP"

+ db_name = "MYCDB"

+ pdb_name = "PDB1"

...

+ db_backup_config { …

+ backup_destination_details {

}

}

}

}

+ db_system_options {

+ storage_management = (known after apply) }

Plan: 15 to add, 0 to change, 0 to destroy.

Changes to Outputs:

+ DB_STATE = (known after apply)

+ DB_Version = (known after apply)

+ Subnet_CIDR_DB = "192.168.78.0/24"

+ Subnet_Name_DB = "db-sub"

+ bastion_name = (known after apply)

+ bastion_session_name = "Session-Mybastion"

+ bastion_session_ssh_connection = (known after apply)

+ bastion_session_state = (known after apply)

+ bastion_session_target_resource_details = [

+ db_system_options = (known after apply)

+ hostname = "hopsdb-oci"

+ private_ip = "192.168.78.10"

+ vcn_id = (known after apply)

+ vcn_name = "db-vcn"

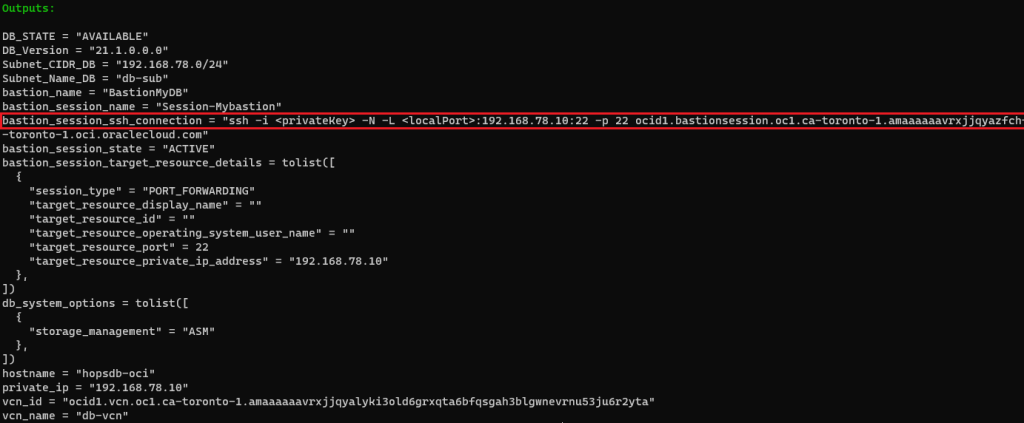

Now let’s provision our DBCS instance (output has been truncated for more visibility)

$ terraform apply -auto-approve

...

oci_core_virtual_network.vcnterra: Creation complete after 1s

oci_core_security_list.terraApp_sl: Creation complete after 1s

oci_core_internet_gateway.igtw: Creation complete after 1s

oci_core_security_list.terra_sl: Creation complete after 1s

oci_core_route_table.apprt: Creation complete after 1s

oci_core_service_gateway.obj-svcgw: Creation complete

oci_core_nat_gateway.natgw: Creation complete after 3s

oci_core_default_route_table.rt: Creation complete after 0s

oci_core_subnet.terraApp: Creation complete after 5s

oci_core_subnet.terraDB: Creation complete after 5s

oci_database_db_system.MYDBSYS: Creating...

oci_core_drg.drgw: Creation complete after 9s

oci_core_drg_attachment.drgw_attachment: Creation complete after 15s

oci_database_db_system.MYDBSYS: Creation complete after 50m58s

Apply complete! Resources: 15 added, 0 changed, 0 destroyed

As mentioned earlier I included a bastion service session to remote log into the target database instance in the private subnet from my workstation through a port forwarding tunnel. Do you want to know the full SSH Command? Look no further! it is already displayed in my Terraform output after the apply >> bastion_session_ssh_connection

The final ssh command will look like this, notice I added and run it in the background, so I won’t have to open another session to login to the Private DB instance.

# ssh -i ~/.ssh/id_rsa_oci -N -L 22:192.168.78.10:22 -p 22 ocid1.bastionsession.oc1.ca-toronto-1.amaaaaaavr**a@host.bastion.ca-toronto-1.oci.oraclecloud.com &

Run the final ssh command to access the target resource using a sort of loopback where localhost is forwarded into the target instance IP through the opened proxy tunnel.

# ssh -i ~/.ssh/id_rsa_dbcs opc@localhost

[opc@hopsdb-oci ~]$ sudo dbcli describe-component --- target instance

System Version

---------------

22.1.1.1.0

Component Installed Version Available Version

---------------------------------------- -------------------- --------------------

GI 21.1.0.0.0 21.5.0.0

DB 21.1.0.0.0 21.5.0.0

[opc@hopsdb-oci]$ sudo dbcli list-databases ID DB Name DB Type DB Version CDB Class Storage Status DbHomeID ------- ---------- -------- ----------- ----- ----- -------- ---------- ----------- a3** MYCDB Si 21.1.0.0.0 true Oltp ASM Configured 9a841-****

What you should know

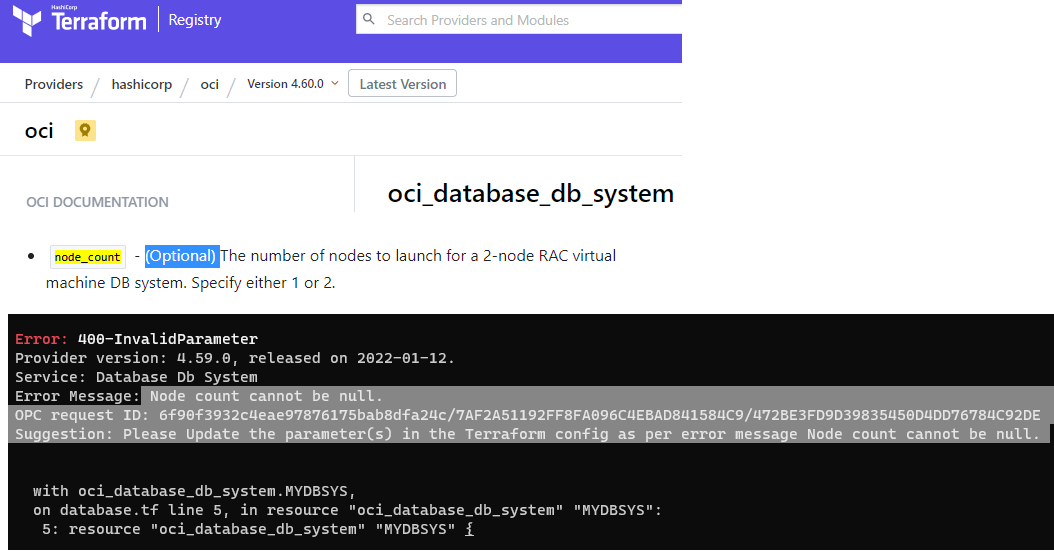

Terraform registry Doc for the OCI Provider is not up to date i.e node_count in db_system resource is really required

The list of valid releases will change and can break your deployment. (i.e 19.11.0.0 was recently dropped from the list) you may want to use the base release first.

We have demonstrated in this tutorial how to quickly deploy a Database instance using Terraform in OCI and leverage along with all necessary network resources

Remember that all user attributes in this exercise can be modified in the variables.tf file.

Adding a bastion service on top of the stack including the required ssh command to access the DBCS instance is a great value (port 1512 can also be forwarded to connect from SQL Developer)

From this stack, you can safely add new components via sub-modules or new resources in the .tf files

With this simple example, you have no excuse not to try a Terraform deployment for your Databases in OCI

You can also import the stack into your resource manager service or even pipeline them in Gitlab/GitHub or OCI DevOps

Introduction OCI Quick Start repositories on GitHub are collections of Terraform scripts and configurations provided by Oracle. These repositories ... Read More

Introduction So far, I have used Oracle AutoUpgrade, many times in 3 different OS’. Yet the more you think you’ve seen it all and reached the ... Read More